The AI landscape isn’t just shifting; it’s expanding. While the cloud remains the powerhouse for massive scale, businesses now have multiple options for where their intelligence lives. Foundry Local – part of the Microsoft Foundry ecosystem – allows you to browse and deploy high-performance AI models directly on your local hardware, bridging the gap between cloud innovation and on-premises control.

Why Foundry Local Fits the Microsoft Stack

By using local models, companies can choose the best environment for each specific task. Whether you need the sheer scale of the cloud, or the privacy and speed of the edge, Foundry Local provides the tools to run Large Language Models (LLMs) and vision models efficiently on-premises – often without the need for expensive, high-end GPUs.

- Hybrid Flexibility: Use the same SDKs and APIs for both local and cloud deployments. You can prototype locally for free and scale to Microsoft Foundry in the cloud with minimal code changes.

- No “GPU Tax”: While Foundry Local supports NVIDIA CUDA, it also leverages OpenVINO and WebGPU. This means it can squeeze impressive performance out of standard Intel/AMD integrated GPUs and even NPUs found in modern “AI PCs” and laptops.

- CPU Optimization: For lighter tasks like text classification or simple chat, many of the smaller models (like the Phi-3.5 family) run well on standard CPUs, making AI accessible without a specialized hardware budget.

- Seamless Governance: When paired with Azure Arc, you can manage, monitor, and update your fleet of local AI deployments directly from the Azure portal, maintaining enterprise-grade control over edge devices.
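As a rough illustration of the hybrid point above, the sketch below builds the same OpenAI-style chat request for either a local or a cloud endpoint, changing only the base URL, key, and model name. It assumes both endpoints accept OpenAI-compatible chat completion requests. The URLs, keys, model names, and the `Target` type are placeholders invented for this example, not values taken from Foundry documentation.

```python
import json
import urllib.request
from dataclasses import dataclass


@dataclass(frozen=True)
class Target:
    """Where a chat request should be sent. All values are placeholders."""
    base_url: str
    api_key: str
    model: str


# Hypothetical endpoints: substitute the values from your own deployment.
LOCAL = Target("http://localhost:8000/v1", "not-needed-locally", "phi-3.5-mini")
CLOUD = Target("https://example-resource.invalid/v1", "YOUR_CLOUD_KEY", "example-cloud-model")


def build_chat_request(target: Target, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = json.dumps({
        "model": target.model,
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    return urllib.request.Request(
        url=f"{target.base_url}/chat/completions",
        data=body,
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {target.api_key}",
        },
        method="POST",
    )


def ask(target: Target, prompt: str) -> str:
    """Send the request and return the first reply (needs a live endpoint)."""
    with urllib.request.urlopen(build_chat_request(target, prompt)) as resp:
        return json.load(resp)["choices"][0]["message"]["content"]
```

In this sketch, moving a prototype from local to cloud means calling `ask(CLOUD, ...)` instead of `ask(LOCAL, ...)`; the request shape itself does not change.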

Industry Spotlight: Local Apps in Action

The ability to execute AI locally is a game-changer for sectors where data security and real-time processing are non-negotiable, or where regulation or risk appetite rules out the cloud:

- Manufacturing Intelligence: Process telemetry and sensitive factory data entirely on-premises. Predicting equipment failure locally keeps plants running without a constant external connection.

- Healthcare Compliance: Build HIPAA-compliant transcription services that keep patient data within the four walls of the clinic. Although HIPAA is a US standard, the same approach applies to equivalent UK data protection requirements.

- Finance & Government: Perform real-time fraud detection or analyse classified data within air-gapped environments, so the data never leaves your control.

AI as a Managed Service

With managed IT services, AI is no longer a separate silo; it’s a core component of your managed infrastructure. Integrating Foundry Local into your ITaaS strategy allows for:

- Predictable Costs: Move away from volatile “per-token” cloud billing. Once deployed on your local fleet, the “compute cost” is already covered by your existing hardware lifecycle.

- Simplified Governance: Manage local AI deployments with the same standards as your desktop or server images.

- Edge Reliability: Ensure that mission-critical AI tools remain available even if the primary internet circuit goes down.
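To make the cost comparison above concrete, here is a small worked calculation. Every figure in it (token volume, price per million tokens, hardware and running costs) is a made-up input for illustration, not a quoted price; swap in your own numbers.

```python
from typing import Optional


def monthly_cloud_cost(tokens_per_month: int, price_per_million_tokens: float) -> float:
    """Per-token billing: the cost scales directly with usage."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens


def breakeven_months(
    extra_hardware_cost: float,
    monthly_local_running_cost: float,
    tokens_per_month: int,
    price_per_million_tokens: float,
) -> Optional[float]:
    """Months until local hardware pays for itself, or None if it never does."""
    saving = (
        monthly_cloud_cost(tokens_per_month, price_per_million_tokens)
        - monthly_local_running_cost
    )
    if saving <= 0:
        return None  # cloud is cheaper at this usage level
    return extra_hardware_cost / saving


# Hypothetical inputs: 500M tokens a month at 2.00 per million tokens,
# 4,000 of additional hardware, 200 a month to run it locally.
months = breakeven_months(4_000, 200, 500_000_000, 2.0)
```

If the workload runs on devices you already own, `extra_hardware_cost` is zero and the comparison reduces to the monthly cloud bill against the local running cost.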

The Holistic AI Stack: Cloud, Hybrid, and Local

A truly integrated strategy uses the cloud for “heavy lifting” and the edge for “daily intelligence.”

- OpenAI (The Frontier): Leverage the massive scale of the cloud for complex reasoning, large-scale data analysis, and creative generation that requires high-concurrency compute.

- Microsoft Foundry (The Orchestrator): Your central control plane. It allows you to manage agents, switch between thousands of models, and enforce enterprise-grade governance across your entire organization.

- Foundry Local (The Secure Edge): The “private” layer of your stack. Run models like Phi-4 or Llama 3.2 directly on your local hardware to handle sensitive workloads with no network round trip and with data kept on-premises.
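The split described above can be sketched as a simple routing rule. This is one possible policy under stated assumptions, not a Foundry feature: the `Workload` fields, the `route` function, and the 300 ms default round-trip estimate are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    LOCAL = "local"
    CLOUD = "cloud"


@dataclass(frozen=True)
class Workload:
    name: str
    contains_sensitive_data: bool
    max_latency_ms: int
    needs_frontier_model: bool


def route(
    workload: Workload,
    internet_available: bool = True,
    cloud_round_trip_ms: int = 300,  # assumed estimate; measure your own
) -> Tier:
    """Pick where a workload should run, checking the hardest constraints first."""
    if workload.contains_sensitive_data:
        return Tier.LOCAL  # data must not leave the premises
    if not internet_available:
        return Tier.LOCAL  # keep working through an outage
    if workload.max_latency_ms < cloud_round_trip_ms:
        return Tier.LOCAL  # the cloud cannot meet the deadline
    if workload.needs_frontier_model:
        return Tier.CLOUD  # heavy lifting goes to the cloud
    return Tier.LOCAL  # default to the cheaper local tier


inspection = Workload("line inspection", False, 50, False)
research = Workload("market analysis", False, 10_000, True)
```

Here `route(inspection)` selects the local tier because of its 50 ms deadline, while `route(research)` selects the cloud; the same research task falls back to local if `internet_available` is false.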

The Powerhouse Models Under the Hood

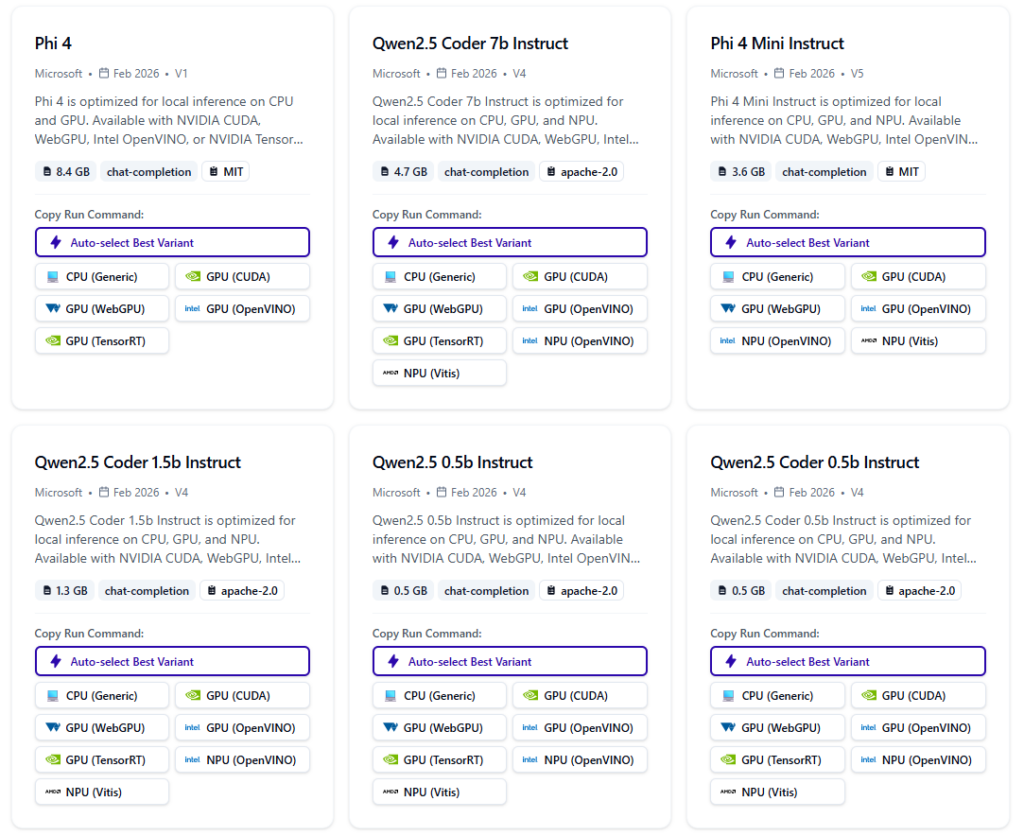

Foundry Local supports a curated selection of “Small Language Models” (SLMs) and specialised vision models that are optimised for efficiency. These aren’t just “lite” versions; they are highly capable tools designed for specific tasks:

| Model Family | Use | Hardware |
|---|---|---|
| Phi-4 & Phi-3.5 | Microsoft’s flagship SLMs. Incredible at reasoning and logic despite their small size. | Optimized for NPUs and standard CPUs. |
| Mistral 7B | The “gold standard” for open-weight models. Great for general-purpose chat and instruction following. | Runs beautifully on Intel/AMD GPUs. |
| DeepSeek R1 | Exceptional at coding and mathematical reasoning. Ideal for local developer tools. | High performance on NVIDIA RTX cards. |
| Llama 3.2 (Vision) | Allows you to “see” and “describe” images. Perfect for quality control on factory floors. | Leverages OpenVINO for integrated graphics. |

What Can You Actually Achieve?

The goal of Foundry Local isn’t just to “run AI” – it’s to solve specific business problems where public cloud is not an option:

- Low-Latency Quality Control: A camera on a production line identifies a microscopic crack in a part. Because inference runs on-site, the system can trigger a physical stop in less than 50ms, with no network round trip to a cloud API.

- Private Knowledge Bases: A law firm or bank indexes thousands of confidential files on an internal server and uses a local instance of Phi-3.5 to query them. Staff can now “chat” with their documents to find precedents without any files ever leaving that server.

- Offline Productivity: A field engineer at a distillery uses a rugged laptop equipped with an NPU to run DeepSeek. They can get complex troubleshooting advice entirely offline.

- Cost-Effective Transcription: Instead of paying per-minute API fees, a medical clinic or customer service hub runs local transcription, removing per-use charges and leaving only hardware and running costs.
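For the private knowledge base scenario, the "chat with your documents" step usually means retrieving the most relevant files and passing them to the model as context. The sketch below shows that idea with crude keyword matching and in-memory text; a production system would more likely use embeddings and a proper index. The function names and sample documents are invented for illustration.

```python
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lower-case the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def score(query: str, document: str) -> int:
    """Count how often the query's distinct words appear in the document."""
    counts = Counter(tokenize(document))
    return sum(counts[word] for word in set(tokenize(query)))


def top_documents(query: str, documents: dict[str, str], k: int = 2) -> list[str]:
    """Return up to k document names, best match first, skipping zero scores."""
    ranked = sorted(documents, key=lambda name: (-score(query, documents[name]), name))
    return [name for name in ranked[:k] if score(query, documents[name]) > 0]


def build_prompt(query: str, documents: dict[str, str], k: int = 2) -> str:
    """Assemble a prompt for a local model from the best-matching excerpts."""
    context = "\n\n".join(
        f"[{name}]\n{documents[name]}" for name in top_documents(query, documents, k)
    )
    return f"Answer using only the excerpts below.\n\n{context}\n\nQuestion: {query}"


# Invented sample files standing in for a confidential document store.
docs = {
    "lease.txt": "The lease term is five years with a break clause.",
    "nda.txt": "The parties agree to keep information confidential.",
    "invoice.txt": "Payment due in thirty days.",
}
prompt = build_prompt("What is the lease break clause?", docs)
```

The resulting `prompt` would then be sent to the locally hosted model, so both the documents and the question stay on the internal server.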

Build Your Local AI Roadmap Together

Implementing local AI isn’t about moving away from the cloud; it’s about determining the most appropriate environment for your data. We help you navigate the options to find the right balance of cloud power and local privacy.

Partner with us to explore:

- Data & SharePoint Integration: How to safely point local or cloud models at your SharePoint libraries to create a secure “Company Brain” tailored to your specific permissions and needs.

- Infrastructure Audit: Evaluating your current fleet to see where you can run AI today. Many workloads run perfectly on modern laptops, saving you from the “GPU tax.”

- Smart Workload Routing: Identifying which tasks – from industrial quality logs to customer service automation – are best suited for the cloud and which should stay local for speed and security.

The most powerful AI strategy is one that fits your business, not just your browser. MTG can work with you to understand your infrastructure, your data silos, and your goals to build an AI roadmap that is cost-effective, fast, compliant with regulation, and balanced between cloud and local.